
简体中文 | English

ModelLink provides end-to-end solutions for large language models on Ascend chips, including models, algorithms, kernels, and tasks.

Why ModelLink?

Prepared Models

Currently, the following models are supported:

- Aquila: 7B

- Baichuan: 7B/13B

- Bloom: 7B/176B

- InternLM: 7B/65B

- LLaMA: 7B/13B/33B/65B

- LLaMA2: 7B/13B/34B/70B

- Baichuan2: 7B/13B

Downstream Tasks

Currently, the following downstream tasks are supported:

- Instruction/Pretraining dataset support

- Low-parameter fine-tuning

- Inference: human-machine dialogue

- Evaluation with numerous benchmarks

Acceleration Features

Currently, the following acceleration features for LLMs are supported:

- Tensor parallelism

- (Virtual & Optimized) Pipeline parallelism

- Fold-3D

- Recomputation

- Sequence parallelism

- ZeRO-1/2

- Inverted triangle acceleration

- Optimizers

- Merged feed-forward network

- Gradient accumulation

- Memory overcommitment

More features for LLM training on Ascend are under development...

Fused Kernels

Coming soon ...

Quick Start For Model Training

Model Performance

| Model | Size | Node | Mode | NPU | Ref. | Loss | Scripts |
|---|---|---|---|---|---|---|---|
| Aquila | 7B | 1x8 | FP16 | 3394 | 4078 | Loss | Train |
| Baichuan | 7B | 1x8 | FP16 | 2350 | 2036 | Loss | Train |
| | 13B | 1x8 | FP16 | 1016 | 824 | Loss | Train |
| Baichuan2 | 7B | 1x8 | BF16 | 2607 | 3936 | Loss | Train |
| | 13B | 2x8 | BF16 | 852 | 872 | Loss | Train |
| Bloom | 7B1 | 1x8 | FP16 | 2611 | 2525 | Loss | Train |
| | 176B | 12x8 | BF16 | 112 | 107 | Loss | Train |
| InternLM | 7B | 1x8 | BF16 | 2943 | 4078 | Loss | Train |
| | 65B | 4x8 | BF16 | 342 | 414 | Loss | Train |
| LLaMA | 7B | 1x8 | FP16 | 3763 | 3804 | Loss | Train |
| | 13B | 1x8 | FP16 | 1894 | 2012 | Loss | Train |
| | 33B | 4x8 | FP16 | 621 | 776 | Loss | Train |
| | 65B | 4x8 | BF16 | 348 | 426 | Loss | Train |
| LLaMA2 | 7B | 1x8 | BF16 | 2662 | 2884 | Loss | Train |
| | 13B | 1x8 | BF16 | 1550 | 1750 | Loss | Train |
| | 34B | 2x8 | BF16 | 690 | 796 | Loss | Train |
| | 70B | 8x8 | BF16 | 350 | 339 | Loss | Train |

Model Training Software

| Software | Version |
|---|---|
| Python | 3.8.18 |
| driver | 2023Q4 Commercial Version |
| firmware | 2023Q4 Commercial Version |
| CANN | 2023Q4 Commercial Version |
| binary arithmetic package | 2023Q4 Commercial Version |
| torch | 2.1.0 |
| torch_npu | 2023Q4 Commercial Version |

Downstream Tasks

Content List

| Model | Size | Fine-tuning | Inference | Evaluation | Dataset Support |
|---|---|---|---|---|---|
| Aquila | 7B | -- | inference | evaluation | alpaca_data.json |
| Baichuan | 7B | -- | -- | -- | alpaca_data.json |
| | 13B | lora | inference | evaluation | alpaca_data.json |
| Bloom | 7B1 | lora | inference | evaluation | alpaca_data.json |
| | 176B | -- | inference | evaluation | alpaca_data.json |
| InternLM | 7B | -- | inference | evaluation | alpaca_data.json |
| LLaMA | 7B | lora | inference | evaluation | alpaca_data.json |
| | 13B | lora | inference | evaluation | alpaca_data.json |
| | 33B | lora | inference | evaluation | alpaca_data.json |
| | 65B | -- | inference | -- | alpaca_data.json |
| LLaMA2 | 7B | lora | inference | evaluation | alpaca_data.json |
| | 13B | -- | inference | evaluation | alpaca_data.json |
| | 34B | -- | inference | evaluation | alpaca_data.json |
| | 70B | -- | inference | evaluation | alpaca_data.json |

Instruction/Pretraining dataset support

Quick Start

# for llama, download alpaca dataset, like

wget https://huggingface.co/datasets/tatsu-lab/alpaca/resolve/main/data/train-00000-of-00001-a09b74b3ef9c3b56.parquet

# download tokenizer configs and (selective) weights from

# https://huggingface.co/yahma/llama-7b-hf/tree/main

# rename "LLaMATokenizer" to "LlamaTokenizer" in tokenizer_config.json (a known issue in the Hugging Face config)

mkdir dataset

python tools/preprocess_data.py --input train-00000-of-00001-a09b74b3ef9c3b56.parquet \

--output-prefix dataset/alpaca \

--tokenizer-type PretrainedFromHF \

--tokenizer-name-or-path llama-7b-hf \

--tokenizer-not-use-fast \

--handler-name GeneralInstructionHandler

Preprocessing pretraining dataset

wikipedia dataset

- download wikipedia data from huggingface to WORKSPACE/wikipedia

- download llama tokenizer model and config from huggingface to WORKSPACE/llama-7b-hf

- use preprocessing script to preprocess wikipedia data

# We assume that the data and tokenizer have already been downloaded to WORKSPACE.

cd WORKSPACE

mkdir wikipedia_preprocessed

# specify Hugging Face load_dataset parameters (the --input param will be ignored);

# these params are passed directly to the datasets.load_dataset function

hf_config_json="./hf_config_json.json"

cat <<EOT > $hf_config_json

{

"path": "WORKSPACE/wikipedia",

"name": "20220301.en",

"streaming": true,

"split": "train"

}

EOT
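The heredoc above must be strict JSON, assuming the preprocessing script parses the file with a JSON parser before forwarding it to `datasets.load_dataset`. As a minimal sketch using only the Python standard library, the same parameters can be written and validated from Python instead of by hand; the helper names `write_hf_config` and `read_hf_config` are hypothetical, and `WORKSPACE` is the same placeholder as in the shell example.

```python
import json
import os
import tempfile

# Parameters forwarded to datasets.load_dataset(); "WORKSPACE" is the same
# placeholder directory used in the shell example.
hf_params = {
    "path": "WORKSPACE/wikipedia",
    "name": "20220301.en",
    "streaming": True,  # json.dump writes this as the JSON literal `true`
    "split": "train",
}


def write_hf_config(params, path):
    """Write the load_dataset parameters to `path` as strict JSON (hypothetical helper)."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(params, f, indent=2)
    return path


def read_hf_config(path):
    """Read the parameters back; json.load raises on malformed JSON."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


if __name__ == "__main__":
    out = os.path.join(tempfile.gettempdir(), "hf_config_json.json")
    print(read_hf_config(write_hf_config(hf_params, out)))
```

With the `datasets` package installed, `datasets.load_dataset(**read_hf_config(path))` would then issue the call described above.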

python tools/preprocess_data.py \

--input "WORKSPACE/wikipedia" \

--hf-datasets-params ${hf_config_json} \

--output-prefix WORKSPACE/wikipedia_preprocessed/wikipedia \

--dataset-impl mmap \

--tokenizer-type PretrainedFromHF \

--tokenizer-name-or-path WORKSPACE/llama-7b-hf \

--tokenizer-not-use-fast \

--streaming \

--workers 8

After preprocessing, there will be a wikipedia_text_document.bin and a wikipedia_text_document.idx in the WORKSPACE/wikipedia_preprocessed directory.

Then, we can train a model with the --data-path WORKSPACE/wikipedia_preprocessed/wikipedia_text_document flag.

Note that datasets in Hugging Face have a format like this. The name of the text field can be changed with the --json-key flag, whose default is text.

The wikipedia dataset has four columns (id, url, title and text); the --json-key flag selects which column is used for training.
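To make the role of --json-key concrete, here is a minimal plain-Python sketch that picks one column from wikipedia-style records and derives the expected output file names. The records are made up for illustration, the helper names are hypothetical, and the `<prefix>_<key>_document` naming is an assumption based on Megatron-LM's convention, consistent with the wikipedia_text_document example above.

```python
# Hypothetical records in the Hugging Face wikipedia layout (id, url, title, text).
records = [
    {"id": "1", "url": "https://example.org/a", "title": "A", "text": "Alpha article."},
    {"id": "2", "url": "https://example.org/b", "title": "B", "text": "Beta article."},
]


def select_column(rows, json_key="text"):
    """Return the field chosen by --json-key from every record."""
    missing = [i for i, row in enumerate(rows) if json_key not in row]
    if missing:
        raise KeyError(f"json key {json_key!r} missing in rows {missing}")
    return [row[json_key] for row in rows]


def output_files(output_prefix, json_key="text"):
    """Expected .bin/.idx names, assuming Megatron-LM's '<prefix>_<key>_document' naming."""
    stem = f"{output_prefix}_{json_key}_document"
    return stem + ".bin", stem + ".idx"


print(select_column(records, "title"))  # ['A', 'B']
print(output_files("WORKSPACE/wikipedia_preprocessed/wikipedia"))
```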

alpaca dataset

Besides, the alpaca dataset can also be used for pretraining, as follows.

python tools/preprocess_data.py --input WORKSPACE/train-00000-of-00001-a09b74b3ef9c3b56.parquet \

--output-prefix WORKSPACE/alpaca_preprocessed/alpaca \

--tokenizer-type PretrainedFromHF \

--tokenizer-name-or-path WORKSPACE/llama-7b-hf \

--tokenizer-not-use-fast \

--json-key text

Preprocessing instruction dataset

alpaca dataset

# for llama, download alpaca dataset, like

# wget https://huggingface.co/datasets/tatsu-lab/alpaca/resolve/main/data/train-00000-of-00001-a09b74b3ef9c3b56.parquet

# download tokenizer configs and (selective) weights from

# https://huggingface.co/yahma/llama-7b-hf/tree/main

# rename "LLaMATokenizer" to "LlamaTokenizer" in tokenizer_config.json (a known issue in the Hugging Face config)

cd WORKSPACE

mkdir alpaca_preprocessed

python tools/preprocess_data.py --input WORKSPACE/alpaca/train-00000-of-00001-a09b74b3ef9c3b56.parquet \

--output-prefix WORKSPACE/alpaca_preprocessed/alpaca \

--tokenizer-type PretrainedFromHF \

--tokenizer-name-or-path WORKSPACE/llama-7b-hf \

--tokenizer-not-use-fast \

--handler-name GeneralInstructionHandler \

--append-eod

After preprocessing, there will be three bin files and three idx files in the WORKSPACE/alpaca_preprocessed directory. Then, we can train a model with the --data-path WORKSPACE/alpaca_preprocessed/alpaca and --is-instruction-dataset flags.

In addition, we have developed dynamic padding for instruction datasets, which can be enabled with the --variable-seq-lengths flag.
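Dynamic padding, in general, pads each batch only to its longest sample instead of to a fixed --seq-length. The following is a minimal plain-Python sketch of that idea, not ModelLink's implementation of --variable-seq-lengths; the pad id and the `pad_batch` helper are hypothetical.

```python
PAD_ID = 0  # hypothetical pad token id; use your tokenizer's pad id in practice


def pad_batch(batch, pad_id=PAD_ID, fixed_len=None):
    """Right-pad lists of token ids to a common length.

    fixed_len=None -> dynamic padding: pad to the longest sample in the batch.
    fixed_len=N    -> static padding: pad every sample to N (like --seq-length).
    Returns (input_ids, attention_mask), where the mask is 1 for real tokens.
    """
    target = fixed_len if fixed_len is not None else max(len(seq) for seq in batch)
    input_ids, attention_mask = [], []
    for seq in batch:
        if len(seq) > target:
            raise ValueError(f"sequence of length {len(seq)} exceeds {target}")
        pad = target - len(seq)
        input_ids.append(list(seq) + [pad_id] * pad)
        attention_mask.append([1] * len(seq) + [0] * pad)
    return input_ids, attention_mask


batch = [[5, 6, 7], [8]]
dynamic_ids, dynamic_mask = pad_batch(batch)
static_ids, _ = pad_batch(batch, fixed_len=512)
print(dynamic_ids)                # [[5, 6, 7], [8, 0, 0]]
print(dynamic_mask)               # [[1, 1, 1], [1, 0, 0]]
print(sum(map(len, static_ids)))  # 1024 positions instead of 6
```

The saving comes from short instruction samples: the static version computes over 1024 positions for 4 real tokens, while the dynamic version uses 6.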

Note that the instruction dataset uses the --handler-name GeneralInstructionHandler flag, which selects the GeneralInstructionHandler class in ascendspeed/data/data_handler.py to build prompts.

If you have an alpaca-style dataset with instruction, input and output columns, just use GeneralInstructionHandler.

In addition, BelleMultiTurnInstructionHandler handles the belle dataset, MOSSInstructionHandler handles the MOSS dataset, and LeetcodePythonInstructionHandler handles the Leetcode dataset.
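For intuition about what an alpaca-style handler does with the instruction, input and output columns, here is a minimal sketch that assumes the original Stanford Alpaca prompt templates. The template actually used by GeneralInstructionHandler may differ, and `build_example` is a hypothetical helper.

```python
# Stanford Alpaca prompt templates (an assumption; the template actually used by
# GeneralInstructionHandler may differ).
PROMPT_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes "
    "the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Input:\n{input}\n\n### Response:\n"
)
PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task. Write a response that "
    "appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)


def build_example(record):
    """Turn an alpaca-style record into (prompt, target) strings."""
    if record.get("input"):
        prompt = PROMPT_WITH_INPUT.format(instruction=record["instruction"], input=record["input"])
    else:
        prompt = PROMPT_NO_INPUT.format(instruction=record["instruction"])
    return prompt, record["output"]


prompt, target = build_example(
    {"instruction": "Give three tips for staying healthy.", "input": "", "output": "Sleep well."}
)
print(prompt + target)
```

During instruction tuning the loss is typically computed only on the target tokens, which is why the prompt and target are kept separate here.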

Low-parameter fine-tuning

Lora

Now, we support Lora for fine-tuning your models.

First, install version 0.4.0 of the peft library:

pip install peft==0.4.0

With torch==1.11.0, you can alternatively install peft from the source package in its GitHub repository, which lets you modify the setup.py file to avoid some dependency issues.

Next, add this argument to your script to enable Lora:

# Llama example

--lora-target-modules query_key_value dense gate_proj dense_h_to_4h dense_4h_to_h \

There are other Lora-related arguments, shown below; you can find their definitions in the PEFT library.

# Llama example

--lora-r 64 \

--lora-alpha 128 \

--lora-modules-to-save word_embeddings output_layer \

--lora-register-forward-hook word_embeddings input_layernorm \

Among them, the --lora-register-forward-hook argument repairs the gradient chain break caused by pipeline parallelism (PP). It only needs to be set to the input layer of each PP stage, and the repair does not add trainable parameters. The --lora-modules-to-save argument is used for fine-tuning when the vocabulary is expanded; if you do not need this, omit it.
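The values in the Llama example (--lora-r 64, --lora-alpha 128) can be put into numbers. This sketch assumes PEFT's convention that the low-rank update B A is scaled by lora_alpha / r, and uses the LLaMA-7B hidden size (4096) from the evaluation example; the helper names are hypothetical.

```python
def lora_extra_params(d_in, d_out, r):
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix:
    A has shape r x d_in and B has shape d_out x r."""
    return r * (d_in + d_out)


def lora_scaling(alpha, r):
    """Factor applied to the low-rank update B @ A (PEFT uses lora_alpha / r)."""
    return alpha / r


hidden = 4096                      # LLaMA-7B hidden size (--hidden-size 4096)
full = hidden * hidden             # parameters in one 4096 x 4096 projection
extra = lora_extra_params(hidden, hidden, r=64)
print(extra, full)                 # 524288 16777216
print(extra / full)                # 0.03125, i.e. about 3% of the frozen matrix
print(lora_scaling(alpha=128, r=64))  # 2.0
```

Keeping alpha / r fixed while changing r keeps the update's magnitude roughly comparable, which is one reason alpha is often set as a multiple of r.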

Finally, with Lora enabled, only the Lora parameters are saved. Correspondingly, when loading a model you need to specify both the original model weight path and the Lora weight path. States such as the optimizer are taken from the Lora weight path.

--load ${ORIGIN_CHECKPOINT} \

--lora-load ${LORA_CHECKPOINT} \

There is an example you can refer to.

After Lora fine-tuning of the Llama model, an instruction dialogue looks like this:

You >> Give three tips for staying healthy.

AscendSpeed:

- Start exercising regularly and eat healthy food.

- Get a good eight hours of sleep each night.

- Take medications regularly.

After Lora fine-tuning, if you need a model without the Lora structure, just run this script to merge the two model files given by --load and --lora-load into a model without the Lora structure. The new weight files are stored in the --save path.
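Merging works because the adapter is linear. Assuming the PEFT-style update W' = W + (alpha / r) B A, the merged weight gives the same output as base weight plus adapter. The following plain-Python sketch illustrates that identity on tiny matrices; it is not the merge script itself, and all helper names are hypothetical.

```python
def matmul(a, b):
    """Plain-Python product of a (m x k) and b (k x n), both lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def scale(a, s):
    return [[s * x for x in row] for row in a]


def merge_lora(w, a, b, alpha, r):
    """Fold a LoRA adapter into the base weight: W' = W + (alpha / r) * B @ A."""
    return add(w, scale(matmul(b, a), alpha / r))


w = [[1, 0, 2], [0, 1, 1]]    # base weight, d_out=2 x d_in=3
a = [[1, 1, 0]]               # LoRA A, r=1 x d_in=3
b = [[2], [1]]                # LoRA B, d_out=2 x r=1
x = [[1], [2], [3]]           # input column vector

merged_out = matmul(merge_lora(w, a, b, alpha=2, r=1), x)
adapter_out = add(matmul(w, x), scale(matmul(b, matmul(a, x)), 2 / 1))
print(merged_out, adapter_out)  # [[19.0], [11.0]] [[19.0], [11.0]]
```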

Inference: human-machine dialogue

Currently, we support inference in the following four modes:

- PTD only

- DeepSpeed ZeRO only

- DeepSpeed ZeRO in PIPELINE with TP

- Model fine-tuned with lora

Quick Start

Here are some example scripts for each of the modes above that you can launch directly.

Please note that:

- If you want to use weights from Hugging Face, run the weight conversion script first. Taking Llama-7B as an example:

  - PTD only

        python tools/ckpt_convert/llama/convert_weights_from_huggingface.py --input-model-dir llama-7b-hf \
            --output-model-dir llama-7b-tp2-pp2 \
            --tensor-model-parallel-size 2 \
            --pipeline-model-parallel-size 2 \
            --type 7B

  - DeepSpeed ZeRO only

        python tools/ckpt_convert/llama/convert_weights_from_huggingface.py --input-model-dir llama-7b-hf \
            --output-model-dir llama-7b-deepspeed \
            --type 7B \
            --deepspeed

- You need to modify some variables in the shell script, such as the model weight path and vocab path.

  - PTD only: In this mode, the model is split with pipeline parallelism and tensor parallelism in the Megatron way.

        sh examples/llama/generate_llama_7B_tp2_pp2.sh

  - DeepSpeed ZeRO only: In this mode, the model uses the DeepSpeed ZeRO 1, 2 or 3 definition with tp=1, pp=1.

        sh examples/alpaca/generate_alpaca_13B_deepspeed.sh

  - DeepSpeed ZeRO in Pipe with TP: In this mode, the model uses the pipe model definition in DeepSpeed ZeRO 1, 2 or 3 with tp>1, pp=1.

        sh examples/llama/generate_llama_7B_deepspeed_pipeline.sh

  - If you want to use a Lora model, refer to:

        sh examples/alpaca/generate_alpaca_13B_lora_deepspeed.sh

Some examples with Chinese-LLaMA-Alpaca-13B weights are shown below.

Usage Guide

Follow these steps to write your own inference code:

Initializing the Distributed Environment

initialize_megatron(args_defaults={'no_load_rng': True, 'no_load_optim': True})

Initializing model and loading weights

from modellink import get_args
from modellink.model import GPTModel
from modellink.arguments import core_transformer_config_from_args


def model_provider(pre_process=True, post_process=True):
    """Build the model."""
    config = core_transformer_config_from_args(get_args())
    init_model = GPTModel(
        config,
        num_tokentypes=0,
        parallel_output=False,
        return_moe_loss=False,
        pre_process=pre_process,
        post_process=post_process
    )
    return init_model


model = GPTModel.from_pretrained(
    model_provider=model_provider,
    pretrained_model_name_or_path="your model weight path"
)

"""

This is an API for initializing the model and loading weights.

Parameters
----------
model_provider (`func`):
    Function used to build the model object, similar to the model definition used in training.
pretrained_model_name_or_path (`str`, *optional*, defaults to None):
    File path of the model weights in Megatron format (TP/PP partitions may be used).
    If it is None, randomly initialized weights are used.

"""

Generate text in HuggingFace-like ways

- Greedy search

      responses = model.generate("Write quick sort code in python", max_new_tokens=512)

- Sampling with top-k and top-p

      responses = model.generate("Write quick sort code in python", do_sample=True, temperature=1.0, top_k=50, top_p=0.95, max_new_tokens=512)

- Beam search with top-k and top-p

      responses = model.generate("Write quick sort code in python", num_beams=4, top_k=50, top_p=0.95, max_new_tokens=512)

- Beam search with top-k and top-p sampling

      responses = model.generate("Write quick sort code in python", do_sample=True, temperature=0.6, num_beams=4, top_k=50, top_p=0.95, max_new_tokens=512)
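The sampling options above (temperature, top_k, top_p) follow the usual HuggingFace-style semantics. As a hedged illustration of what they do, and not ModelLink's `generate` implementation, here is a plain-Python sketch of one decoding step: scale logits by temperature, keep the top-k tokens, keep the smallest prefix whose probability reaches top_p, renormalize, and draw. The helper names are hypothetical.

```python
import math
import random


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_top_p_filter(logits, top_k=0, top_p=1.0):
    """Return the token ids kept after top-k and then top-p (nucleus) filtering.

    top_k=0 disables top-k and top_p=1.0 disables top-p. The most likely token is
    always kept; top-p keeps the smallest prefix whose probability reaches top_p.
    """
    order = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    if top_k > 0:
        order = order[:top_k]
    probs = softmax([logits[i] for i in order])  # renormalized over top-k survivors
    kept, cumulative = [], 0.0
    for token, p in zip(order, probs):
        kept.append(token)
        cumulative += p
        if cumulative >= top_p:
            break
    return kept


def sample_next_token(logits, temperature=1.0, top_k=50, top_p=0.95, rng=random):
    """Temperature-scale, filter, renormalize, and draw one token id."""
    scaled = [x / temperature for x in logits]
    kept = top_k_top_p_filter(scaled, top_k, top_p)
    weights = softmax([scaled[i] for i in kept])
    return rng.choices(kept, weights=weights, k=1)[0]


logits = [2.0, 1.0, 0.0, -1.0]
print(top_k_top_p_filter(logits, top_k=2))             # [0, 1]
print(top_k_top_p_filter(logits, top_k=2, top_p=0.5))  # [0]
print(sample_next_token(logits, rng=random.Random(0)))
```

Lower temperatures sharpen the distribution before filtering, so with temperature=0.6 fewer tokens survive a given top_p than with temperature=1.0.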

Evaluation with Numerous Benchmarks

Quick Show

| Task | Subset | Model | NPU | Reference | Benchmark |
|---|---|---|---|---|---|
| BBH | Test | Llama7b | 0.334 | 0.333 | 0.335 |
| AGIEval | Test | Llama7b | 0.210 | 0.210 | 0.206 |
| HumanEval | Test | Llama7b | 0.128 | 0.128 | 0.128 |
| BoolQ | Test | Llama7b | 0.742 | 0.742 | 0.754 |
| GSM8K | Test | Llama7b | 0.102 | 0.103 | 0.100 |
| CEval | Validation | Llama7b | 0.408 | 0.404 | / |
| MMLU | Test | Llama7b | 0.333 | 0.324 | 0.351 |

Quick Start

# Configure model path and vocab_file path

# Vocab file can be downloaded from https://huggingface.co/yahma/llama-7b-hf

CHECKPOINT=../models/llama-7b-tp2-pp4/

VOCAB_FILE=../models/llama7b-hf/

# configure task and data path

DATA_PATH="dataset/boolq/test"

TASK="boolq"

# configure generation parameters

python -m torch.distributed.launch $DISTRIBUTED_ARGS evaluation_llama.py \

--task-data-path $DATA_PATH \

--task $TASK \

--seq-length 512 \

--max-new-tokens 1 \

--evaluation-batch-size 1 \

--max-position-embeddings 512 \

--tensor-model-parallel-size 2 \

--pipeline-model-parallel-size 4 \

--num-layers 32 \

--hidden-size 4096 \

--ffn-hidden-size 11008 \

--load ${CHECKPOINT} \

--num-attention-heads 32 \

--tokenizer-type PretrainedFromHF \

--tokenizer-name-or-path $VOCAB_FILE \

--tokenizer-not-use-fast \

--fp16 \

--micro-batch-size 1 \

--seed 42 | tee logs/train.log

# start evaluation

bash tasks/evaluation/eval_llama.sh

Task Introduction

The most important evaluation parameter is --max-new-tokens, which sets the output length of model generation. For example, multiple-choice questions need a much shorter output than coding tasks. This parameter also largely determines the speed of model generation.

python -m torch.distributed.launch $DISTRIBUTED_ARGS evaluation_llama.py \

--task-data-path $DATA_PATH \

--task $TASK \

--seq-length 512 \

--max-new-tokens 1 \

--evaluation-batch-size 1 \

--max-position-embeddings 512 \

--tensor-model-parallel-size 2 \

--pipeline-model-parallel-size 4 \

--num-layers 32 \

--hidden-size 4096 \

--ffn-hidden-size 11008 \

--load ${CHECKPOINT} \

--num-attention-heads 32 \

--tokenizer-type PretrainedFromHF \

--tokenizer-name-or-path $VOCAB_FILE \

--tokenizer-not-use-fast \

--fp16 \

--micro-batch-size 1 \

--seed 42 | tee logs/train.log

BoolQ

BoolQ is a question answering dataset for yes/no questions. Each question contains a triplet of (question, passage, answer), with the title of the page as optional additional context.

Evaluating on BoolQ is relatively simple: just configure TASK="boolq", --seq-length=512, --max-position-embeddings=512, --max-new-tokens=1.

The zero-shot results are usually affected by the given prompt, and a higher score can be obtained by a suitable prompt.

The prompt can be modified in tasks/evaluation/evaluation.py

# Update new prompt by changing the template

template = "{instruction}"

MMLU

Because MMLU spans many disciplines and is evaluated 5-shot, question length varies greatly across subjects. To run all 57 subjects at once, set TASK="mmlu", --seq-length=2048, --max-position-embeddings=2048, --max-new-tokens=1.

Many websites report MMLU accuracy by discipline. The 57 individual subjects belong to four major categories, so the statistics should be summarized by major category. The website lists the major category of each of the 57 subjects.
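Summarizing by major category amounts to pooling per-subject counts. This sketch uses a four-subject excerpt of the subject-to-category mapping for illustration only (take the full 57-subject mapping from the MMLU reference), and the per-subject counts in the usage example are hypothetical, not measured results.

```python
from collections import defaultdict

# Illustrative excerpt only; the full 57-subject mapping comes from the MMLU reference.
SUBJECT_TO_CATEGORY = {
    "abstract_algebra": "STEM",
    "high_school_us_history": "humanities",
    "econometrics": "social sciences",
    "clinical_knowledge": "other",
}


def category_accuracy(per_subject):
    """per_subject maps subject -> (num_correct, num_questions).

    Returns category -> accuracy, pooling all questions in the category
    (a micro-average over questions, not an average of subject accuracies).
    """
    correct, total = defaultdict(int), defaultdict(int)
    for subject, (ok, n) in per_subject.items():
        cat = SUBJECT_TO_CATEGORY[subject]
        correct[cat] += ok
        total[cat] += n
    return {cat: correct[cat] / total[cat] for cat in total}


def overall_accuracy(per_subject):
    ok = sum(c for c, _ in per_subject.values())
    n = sum(t for _, t in per_subject.values())
    return ok / n


# Hypothetical counts, for illustration only.
counts = {
    "abstract_algebra": (30, 100),
    "high_school_us_history": (102, 204),
    "econometrics": (57, 114),
    "clinical_knowledge": (100, 265),
}
print(category_accuracy(counts))
print(overall_accuracy(counts))
```

Some reports instead average subject accuracies within each category (a macro-average), so state which convention you use when comparing numbers.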

GSM8K

GSM8K is a dataset of 8.5K high-quality, linguistically diverse grade-school math word problems created by human problem writers. The answer to each question is a specific number. Because the evaluation is few-shot, the questions are relatively long, and the output answer contains a chain of thought, so configure TASK="gsm8k", --seq-length=2048, --max-position-embeddings=2048, --max-new-tokens=200.
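Since the output contains a chain of thought, scoring requires extracting a number from it. GSM8K reference solutions end with a line of the form `#### <answer>`; a common heuristic, assumed here and not necessarily what ModelLink's evaluator does, is to take the last number in the generated text. The helper names are hypothetical.

```python
import re

# Integers or decimals, optionally negative, allowing thousands separators.
_NUMBER = re.compile(r"-?\d[\d,]*(?:\.\d+)?")


def reference_answer(solution):
    """GSM8K reference solutions end with '#### <number>'."""
    return solution.split("####")[-1].strip().replace(",", "")


def predicted_answer(generation):
    """Heuristic: take the last number in the model's chain-of-thought output."""
    matches = _NUMBER.findall(generation)
    return matches[-1].replace(",", "") if matches else None


def is_correct(generation, solution):
    pred = predicted_answer(generation)
    return pred is not None and float(pred) == float(reference_answer(solution))


gen = "She sold 48 + 24 = 72 clips. The answer is 72."
ref = "Natalia sold 48/2 = 24 clips in May.\n#### 72"
print(predicted_answer(gen), reference_answer(ref), is_correct(gen, ref))  # 72 72 True
```

The --max-new-tokens=200 budget matters here: if generation is cut off before the final answer, the last number found is usually an intermediate result.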

HumanEval

HumanEval dataset is a handcrafted set of 164 programming problems designed to challenge code generation models. The problems include a function signature, docstring, body, and several unit tests, all handwritten to ensure they're not included in the training set of code generation models.

Since answers in the HumanEval dataset contain long code, configure TASK="human_eval", --seq-length=2048, --max-position-embeddings=2048, --max-new-tokens=200.

AGIEval

AGIEval is a human-centric benchmark specifically designed to evaluate the general abilities of foundation models in tasks pertinent to human cognition and problem-solving. This benchmark is derived from 20 official, public, and high-standard admission and qualification exams intended for general human test-takers, such as general college admission tests (e.g., Chinese College Entrance Exam (Gaokao) and American SAT), law school admission tests, math competitions, lawyer qualification tests, and national civil service exams. Since the answer length varies across question types, configure TASK="agieval", --seq-length=2048, --max-position-embeddings=2048, --max-new-tokens=5 to fit the longest answer.

Big-Bench-Hard

Big-Bench-Hard (BBH) is a subset of BIG-Bench, a diverse evaluation suite; BBH focuses on 23 challenging BIG-Bench tasks for which prior language model evaluations did not outperform the average human rater. It covers multiple areas, including text understanding, reasoning, logical reasoning, mathematical reasoning, and common sense reasoning.

All subtasks except word_sorting are multiple-choice questions, so we can set TASK="bbh", --seq-length=2048, --max-position-embeddings=2048, --max-new-tokens=32, --evaluation-batch-size=4.

CEval

As described by C-Eval, C-Eval is a comprehensive Chinese evaluation suite for foundation models. It consists of 13948 multiple-choice questions spanning 52 diverse disciplines and four difficulty levels, as shown below. You may explore the dataset examples at Explore, or check the C-Eval paper for more details. The dataset contains validation and test data; however, only the validation data has labels for auto-evaluation. If you want to evaluate on the test data, you should email your results to C-Eval. We can set TASK="ceval", --seq-length=2048, --max-position-embeddings=2048, --max-new-tokens=1.

Configuration of models and datasets

In the example below, we use the llama7b model for BoolQ evaluation, so the model path and vocab file must correspond to the llama7b model. The model can be partitioned with suitable parallel parameters; the following example sets tensor-model-parallel-size (tp) = 2 and pipeline-model-parallel-size (pp) = 4. The partitioning command is as follows:

python convert_weights_from_huggingface.py \

--input-model-dir /home/w425040/models/llama-7b-hf \

--output-model-dir /home/w425040/models/llama-7b-tp2-pp4 \

--type 7B \

--tensor-model-parallel-size 2 \

--pipeline-model-parallel-size 4

Then, configure the dataset path and task. Note: since the evaluation parameters differ across datasets, it is not recommended to evaluate two or more datasets together. Evaluation parameters such as --seq-length, --max-new-tokens and --max-position-embeddings need to be adjusted for each dataset; the recommended values are given in the per-dataset notes.

# configure model path and vocab_file path

CHECKPOINT=../models/llama-7b-tp2-pp4/

VOCAB_FILE=../models/llama7b-hf/

# configure task and data path

DATA_PATH="dataset/boolq/test"

TASK="boolq"

# configure generation parameters

Introduction For Acceleration Features

Tensor Parallelism

Tensor parallelism (TP) is a model parallelism strategy that splits the execution of a single transformer module over multiple devices. The basic principle of TP is:

Use the --tensor-model-parallel-size flag to specify the number of devices among which to split the model.
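A minimal single-process sketch of the idea, modeled on a Megatron-style "column-parallel" linear layer: each of the tp ranks holds a slice of the weight's output features, computes its slice of the output, and concatenating the slices (an all-gather in a real multi-device run) reproduces the unsplit result. This is an illustration with hypothetical helper names, not ModelLink's implementation; Megatron also uses row-parallel layers, which split the input dimension and sum partial results instead.

```python
def matvec(w, x):
    """w is a list of rows (out_features x in_features); x is a vector."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def split_rows(w, tp):
    """Shard the output features of w across tp ranks."""
    if len(w) % tp:
        raise ValueError("out_features must be divisible by tp")
    step = len(w) // tp
    return [w[r * step:(r + 1) * step] for r in range(tp)]


def column_parallel_forward(w, x, tp):
    # Each "rank" computes its slice of the output independently; joining the
    # slices stands in for the all-gather a real multi-device run would perform.
    out = []
    for shard in split_rows(w, tp):
        out.extend(matvec(shard, x))
    return out


w = [[1, 2, 3], [4, 5, 6], [7, 8, 9], [1, 0, 1]]
x = [1, 1, 2]
print(matvec(w, x))                      # [9, 21, 33, 3]
print(column_parallel_forward(w, x, 2))  # [9, 21, 33, 3]
```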

(Virtual & Optimized) Pipeline Parallelism

Pipeline parallelism (PP) is a model parallelism strategy that shards the transformer modules into stages, with an equal number of transformer modules on each stage, and then pipelines execution by breaking the batch into smaller microbatches. Virtual pipeline (VP) parallelism optimizes PP by adding virtual stages to reduce pipeline bubble time. Optimized Pipeline Parallelism (OPP) is an enhanced version of VP that further reduces bubble time by choosing the size of each microbatch appropriately. The basic principle of PP and VP is:

To enable pipeline model parallelism, use the --pipeline-model-parallel-size flag to specify the number of stages to split the model into (e.g., splitting a model with 24 transformer layers across 4 stages gives each stage 6 transformer layers).

To enable virtual pipeline parallelism, additionally use the --num-layers-per-virtual-pipeline-stage flag to set the number of layers per virtual stage. Currently, the repository supports VP only in the VP + no-overlap-p2p form, so when you enable VP you must also add the --no-overlap-p2p-communication parameter to disable overlap-p2p.

To enable optimized pipeline parallelism, additionally use the --optimized-pipeline and --manual-mbs example-config-1 flags on top of PP. Note that both VP and OPP reduce bubble time but increase communication time.
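The layer assignment implied by these flags can be worked out directly. The sketch below reproduces the 24-layer, 4-stage example and, for virtual pipelining, mirrors the interleaved assignment used by Megatron-LM, where model chunk v on stage p starts at layer v * (num_layers // vp) + p * layers_per_virtual_stage. The helper names are hypothetical, and the VP assignment is an assumption about how ModelLink inherits Megatron's scheme.

```python
def layers_per_stage(num_layers, pp):
    if num_layers % pp:
        raise ValueError("num_layers must be divisible by the pipeline size")
    return num_layers // pp


def pipeline_layers(num_layers, pp):
    """Plain PP: stage p owns one contiguous block of layers."""
    per_stage = layers_per_stage(num_layers, pp)
    return {p: list(range(p * per_stage, (p + 1) * per_stage)) for p in range(pp)}


def interleaved_layers(num_layers, pp, layers_per_virtual_stage):
    """VP: each stage owns several smaller, non-contiguous model chunks."""
    per_stage = layers_per_stage(num_layers, pp)
    if per_stage % layers_per_virtual_stage:
        raise ValueError("layers per stage must be divisible by layers per virtual stage")
    vp = per_stage // layers_per_virtual_stage  # model chunks per stage
    plan = {}
    for p in range(pp):
        chunks = []
        for v in range(vp):
            start = v * (num_layers // vp) + p * layers_per_virtual_stage
            chunks.append(list(range(start, start + layers_per_virtual_stage)))
        plan[p] = chunks
    return plan


print(pipeline_layers(24, 4)[1])        # [6, 7, 8, 9, 10, 11]
print(interleaved_layers(24, 4, 3)[0])  # [[0, 1, 2], [12, 13, 14]]
```

With VP each stage holds smaller chunks, so a microbatch passes through every stage more than once; this shortens the bubble at the cost of the extra point-to-point communication mentioned above.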

Fold3D

Fold3D hides the communication time of data parallelism in VP. The basic principle of Fold3D is:

Use the --fold-mode "aiao" flag to choose the strategy.

(Selective) Recomputation

To reduce NPU memory usage so that large models can be deployed on a training system, we support activation checkpointing and recomputation.

We support two levels of recompute granularity: full and selective. To enable full recomputation, use the --checkpoint-activations flag; to enable selective recomputation, use the --checkpoint-policy flag to choose the selective recomputation strategy. Note that the selective strategy is customized and optimized for Ascend chips.

To improve training performance while making maximal use of NPU memory, we support an auto selective recomputing strategy driven by a manually specified training memory size.

To enable auto selective recomputing, use the --auto-recompute-device-size flag to specify the memory size for the auto selective recomputing strategy (unit: MB).

Note that if you use the --auto-recompute-device-size flag, you must remove --checkpoint-activations.

If OOM occurs, choose a new memory size and restart model training. You can also find the optimal value by binary search.

Auto selective recomputing selects a strategy based on training memory information profiled during the first N steps. You can set the step at which profiling stops with the --auto-recompute-profiling-step flag.

By default, profiling stops at step 10, and the minimum setting is 5 steps. It is recommended to stop profiling after training memory has stabilized, in order to obtain a better recomputation strategy.
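The binary search for --auto-recompute-device-size can be sketched as follows. The `fits` predicate is hypothetical: it stands in for a short trial run with a given size that reports whether training avoided OOM. The sketch assumes the lower bound fits and that a larger size never fits when a smaller one fails.

```python
def largest_fitting_size(fits, low_mb, high_mb, tolerance_mb=256):
    """Binary-search the largest memory size (MB) for which `fits` is True.

    `fits(size_mb)` is a hypothetical stand-in for a trial run with
    --auto-recompute-device-size=size_mb that reports whether it avoided OOM.
    Stops once the search interval is narrower than tolerance_mb.
    """
    if not fits(low_mb):
        raise ValueError("even the lower bound runs out of memory")
    while high_mb - low_mb > tolerance_mb:
        mid = (low_mb + high_mb) // 2
        if fits(mid):
            low_mb = mid   # mid works: search larger sizes
        else:
            high_mb = mid  # mid hits OOM: search smaller sizes
    return low_mb


# Simulated device on which any size up to 50000 MB trains without OOM.
print(largest_fitting_size(lambda s: s <= 50000, low_mb=20000, high_mb=65536))
```

Each probe costs a (short) training run, so a coarse tolerance keeps the number of restarts small, roughly log2((high - low) / tolerance).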

Sequence Parallelism

Sequence parallelism (SP) is a model parallelism strategy that splits the sequence axis in dropout and layernorm layers. In our implementation, SP depends on TP. SP splits the allreduce operation in TP into reduce-scatter and allgather, which reduces memory usage in model training. The basic principle of SP is:

Set --tensor-model-parallel-size larger than 1 and set the --sequence-parallel flag.
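The identity SP relies on is that a reduce-scatter followed by an all-gather yields the same result as an all-reduce, while between the two steps each rank holds only 1/TP of the sequence (where dropout and layernorm run). The following single-process simulation uses lists as stand-ins for per-rank tensors; it is an illustration with hypothetical helper names, not ModelLink's communication code.

```python
def all_reduce(shards):
    """Every rank ends up with the element-wise sum of all ranks' tensors."""
    total = [sum(vals) for vals in zip(*shards)]
    return [list(total) for _ in shards]


def reduce_scatter(shards):
    """Rank r ends up with the summed r-th chunk along the sequence axis."""
    world = len(shards)
    length = len(shards[0])
    if length % world:
        raise ValueError("sequence length must be divisible by the TP size")
    chunk = length // world
    total = [sum(vals) for vals in zip(*shards)]
    return [total[r * chunk:(r + 1) * chunk] for r in range(world)]


def all_gather(chunks):
    """Every rank receives the concatenation of all ranks' chunks."""
    full = [x for c in chunks for x in c]
    return [list(full) for _ in chunks]


# Two TP ranks, each holding partial sums for a length-4 sequence.
shards = [[1, 2, 3, 4], [10, 20, 30, 40]]
print(reduce_scatter(shards))              # [[11, 22], [33, 44]]
print(all_gather(reduce_scatter(shards)))  # [[11, 22, 33, 44], [11, 22, 33, 44]]
```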

ZeRO-1/2/3

Zero Redundancy Optimizer (ZeRO) is a memory-optimization strategy for data parallelism proposed by Microsoft. ModelLink supports ZeRO-1/2/3 by adding a deepspeed branch. The basic principle of ZeRO is:

Use the --use-distributed-optimizer flag.
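The benefit of each ZeRO stage can be estimated with the model-state accounting from the ZeRO paper for mixed-precision Adam: 2N bytes of FP16 parameters, 2N of FP16 gradients and 12N of FP32 optimizer states, 16N in total (the same 16N figure quoted in the Memory Overcommitment section). This sketch ignores activations and buffers, and the helper name is hypothetical.

```python
def zero_memory_bytes(num_params, dp, stage):
    """Per-device model-state memory (bytes) for mixed-precision Adam.

    stage 0: nothing partitioned; 1: optimizer states partitioned across dp;
    2: plus gradients; 3: plus parameters.
    """
    p, g, os_ = 2 * num_params, 2 * num_params, 12 * num_params
    if stage == 0:
        return p + g + os_
    if stage == 1:
        return p + g + os_ / dp
    if stage == 2:
        return p + (g + os_) / dp
    if stage == 3:
        return (p + g + os_) / dp
    raise ValueError("stage must be 0, 1, 2 or 3")


n = 7_000_000_000  # a 7B-parameter model
for stage in range(4):
    print(stage, zero_memory_bytes(n, dp=8, stage=stage) / 1e9, "GB")
# 0 112.0 GB, 1 38.5 GB, 2 26.25 GB, 3 14.0 GB
```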

Inverted Triangle Acceleration

Inverted triangle acceleration is an acceleration module for attention computation that implements flash attention in Python. Normally, self-attention is computed over the entire attention mask. The inverted triangle attention acceleration algorithm skips the blocks in the upper-triangle positions of the attention mask, which do not need to be computed, thereby reducing the amount of computation. The computation process is:

Use the --triangle-attn flag.
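The savings can be counted block by block. With a causal mask, a (query block, key block) tile whose keys all come after its queries is fully masked and can be skipped. The sketch below assumes square tiles, and the block size of 512 is illustrative; the diagonal tiles still need element-wise masking. Helper names are hypothetical.

```python
def num_blocks(seq_len, block):
    return -(-seq_len // block)  # ceiling division


def causal_blocks(seq_len, block):
    """(query_block, key_block) tiles that still need computing under a causal mask."""
    n = num_blocks(seq_len, block)
    return [(q, k) for q in range(n) for k in range(q + 1)]


def block_fully_masked(q, k, block):
    """True if every key in block k lies after every query in block q."""
    first_key = k * block
    last_query = (q + 1) * block - 1
    return first_key > last_query


def skipped_fraction(seq_len, block):
    n = num_blocks(seq_len, block)
    return 1 - len(causal_blocks(seq_len, block)) / (n * n)


print(len(causal_blocks(2048, 512)), skipped_fraction(2048, 512))  # 10 0.375
```

As the number of blocks n grows, the skipped fraction (n - 1) / (2n) approaches one half of the attention tiles.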

Optimizer

For LLMs, Ascend chips support various fused kernels, such as scaled_masked_softmax and rotary_pos_emb. The related examples can be found by searching in this project, and more detailed information is coming soon.

For fused optimizers, two kinds of fused Adam optimizers are provided via --optimizer. Specifically, --optimizer adam saves more memory, while --optimizer fused_adam trains faster.

Merged Feed-Forward Network & Gradient Accumulation

For LLaMA and other LLMs without bias in the FFN, the linear transformations in the FFN can be merged to save communication in tensor parallelism. To enable this feature, set the --mlp-layer-fusion flag. Gradient accumulation uses the gradients of N rounds to make one optimizer step and update the parameters. Here, N = global batch size / micro batch size / DP, and DP = number of devices / tp / pp.
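The formula for N can be checked with a small worked example. The batch sizes and device counts below are illustrative, and the helper names are hypothetical; the divisibility checks reflect the requirement that both divisions come out even.

```python
def data_parallel_size(num_devices, tp, pp):
    """DP = number of devices / tp / pp."""
    if num_devices % (tp * pp):
        raise ValueError("num_devices must be divisible by tp * pp")
    return num_devices // (tp * pp)


def grad_accumulation_steps(global_batch, micro_batch, num_devices, tp, pp):
    """N = global batch size / micro batch size / DP."""
    dp = data_parallel_size(num_devices, tp, pp)
    if global_batch % (micro_batch * dp):
        raise ValueError("global batch must be divisible by micro_batch * DP")
    return global_batch // (micro_batch * dp)


# 8 devices with tp=2, pp=2 leave DP=2; a global batch of 32 with micro batch 1
# therefore needs 16 micro-steps per optimizer step on each data-parallel replica.
print(grad_accumulation_steps(global_batch=32, micro_batch=1, num_devices=8, tp=2, pp=2))  # 16
```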

Memory Overcommitment

In mix precision training, multiple state tensors, such as parameter copies, gradient copies, and optimizer states, occupy a large amount of static memory (16N, where N is the number of parameters). However, in fact, parameters and gradients (4N, N is the number of parameters) that participate in forward and reverse calculation account for a small proportion, and optimizing the preceding state tensors can bring great video memory benefits. By analyzing the actual use of each part of the state tensor, the memory reuse mechanism of the mechanism is obtained, and a multilevel optimizer memory optimization scheme integrating multiple algorithm modules is finally obtained.

- Memory Overcommitment O1 —— Relase FP32 Gradient

- Advantages: Completely equivalent; Support for multiple optimizers; lossless performance.

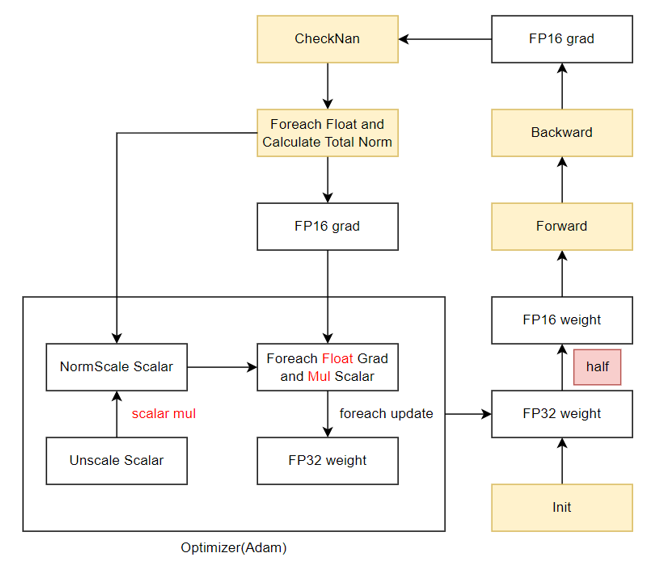

- Algorithm principle: The static memory of the FP32 gradient copy that needs to be permanently stored is reused. The memory of the FP16 gradient is converted into the FP32 format by performing the Foreach+Cast operation when necessary, saving 4N space.

- Usage: This equivalent algorithm is applicable to all optimizers and can be triggered by specifying

--release-fp32-gradin the script. - Restrictions: Currently, only the Adam optimizer is applicable. For other optimizers, see the Adam optimizer implementation.
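The static-memory figures above can be checked with simple arithmetic. This sketch assumes the 16N and 4N quantities are byte counts and uses a 7B-parameter model as an illustration; the helper name is hypothetical. The table below reports overall HBM reduction, which also covers memory this estimate ignores (such as activations), which presumably explains why the measured percentages are smaller than this ratio.

```python
def static_state_bytes(num_params, release_fp32_grad=False):
    """Static training-state memory using the 16N figure quoted above.

    Releasing the FP32 gradient copy (O1, --release-fp32-grad) saves 4N.
    """
    total = 16 * num_params
    if release_fp32_grad:
        total -= 4 * num_params
    return total


n = 7_000_000_000  # a 7B-parameter model
before = static_state_bytes(n)
after = static_state_bytes(n, release_fp32_grad=True)
print(before / 1e9, after / 1e9, 1 - after / before)  # 112.0 84.0 0.25
```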

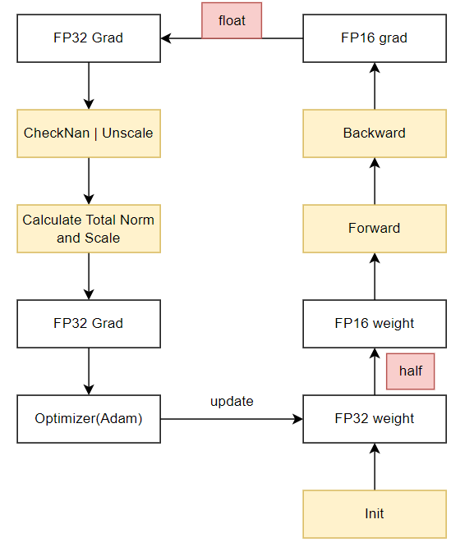

Mixed-precision training process:

Memory overcommitment training process:

Test Result Table:

| Model | Algorithm | Performance | HBM Reduction | Performance Error | Precision Error | Hardware |
|---|---|---|---|---|---|---|
| LLama-7B | baseline | 5.39s/iteration | -- | -- | -- | 910B*8P |
| | O1 algorithm | 5.40s/iteration | ↓ 13.5% | ↓ 0.17% | < 0.05% | 910B*8P |
| LLama-13B | baseline | 8.95s/iteration | -- | -- | -- | 910B*8P |
| | O1 algorithm | 8.92s/iteration | ↓ 14.90% | ↑ 0.34% | < 0.2% | 910B*8P |
| LLama2-7B | baseline | 6.48s/iteration | -- | -- | -- | 910B*8P |
| | O1 algorithm | 6.48s/iteration | ↓ 10.87% | ↓ 0.00% | < 0.2% | 910B*8P |
| Bloom-7B | baseline | 5.45s/iteration | -- | -- | -- | 910B*8P |
| | O1 algorithm | 5.49s/iteration | ↓ 12.68% | ↓ 0.7% | < 0.01% | 910B*8P |
| LLama-32B | baseline | 5.23s/iteration | -- | -- | -- | 910B*16P |
| | O1 algorithm | 5.28s/iteration | ↓ 15.93% | ↓ 0.95% | < 0.02% | 910B*16P |
| LLama-7B | distributed baseline | 5.18s/iteration | -- | -- | -- | 910B*8P |
| | O1 distributed algorithm | 5.19s/iteration | ↓ 9.50% | ↓ 0.2% | < 0.1% | 910B*8P |

Acknowledgments

ModelLink is jointly contributed by the following departments of Huawei Corporation:

- Ascend Computing Product Unit

- Algorithm Unit of Computing Product Unit

- Research Unit of Computing Product Unit

- Open Computing Kit of Computing Product Unit

- General Development Department

- Global Technical Service Department

We appreciate every PR from the community, and contributions to ModelLink are welcome.

Appendix

- Inner Function Description: An introduction to some internal implementation interfaces: InnerInterface.

- Parameters Description: Descriptions and usage of some parameters: param.

- Permission Description: It is recommended that the Linux umask value be greater than or equal to 027. Before running the program, take security measures such as permission control for files required for training, such as ckpt files and logs. Run the program or execute commands as a regular user, not as root or a superuser. Also, set folder permissions to 750 and file permissions to 640. When multiple users share datasets, set read and write permissions for folders and files according to the principle of least privilege to avoid security problems such as unauthorized access.

- Path Description: Interfaces such as torch.load implicitly use the pickle module unless the weights_only parameter is set to True, and pickle is known to be insecure. It is possible to construct malicious pickle data that executes arbitrary code during unpickling. Do not load data in an unsafe mode if it could have come from an untrusted source or could have been tampered with; only load data you trust. Moreover, when you read data from external or user-specified paths, make sure they are trusted and safe, including but not limited to weight paths and dataset paths.

- Communication Matrix: Please refer to this link to check the communication matrix.

- Public Network Address: Here is the Public Network Address.